When we look at screencasts, what are we seeing? When we follow the animated graphical user interface of a program whilst someone explains it, is that reality? Are screencasts a new invention? Some people claim that they have existed since the early 2000s – but they might actually be older … These questions will be explored using two examples. The first is the approximately 90-minute demonstration in which Douglas Engelbart, in San Francisco in 1968, presented the first interactive graphical user interface as a concept. The second is an instalment of a Let’s Play series from 2016 in which the online space game EVE Online plays a central role. The underlying question remains: what do we see when we watch screencasts on a computer screen?

Nothing is new, however new it may appear

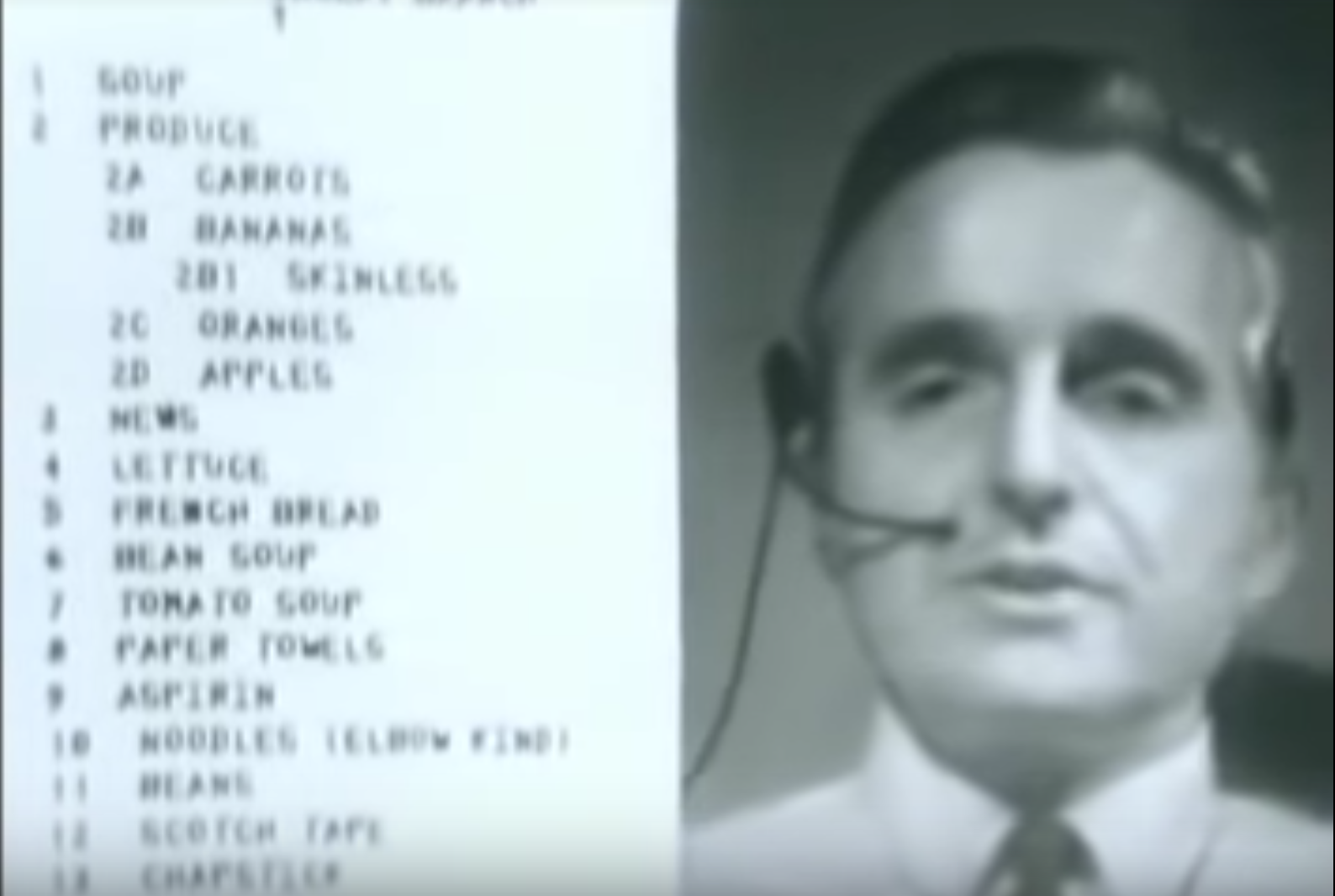

The computer that controlled what was presumably the first graphical user interface, as we understand the concept today, was the size of several cupboards. It stood in a computer centre 48 km away from the venue of the 1968 Fall Joint Computer Conference in San Francisco. The SDS 940 was connected to the conference site by telephone lines, and all that could be seen there was a keyboard, a display terminal for input, and a mouse, which had been developed in Engelbart’s laboratory only a few years earlier and was still a novelty. The SDS 940’s screen output was filmed by a camera placed directly in front of a display at the computer centre and transmitted to the conference site over a directional radio link.

For the first time in their lives, around a thousand conference attendees saw a graphical user interface that differed significantly from the established practice of entering commands in text mode. Using the split-screen technique, a 6 × 6.5 metre cinema screen showed the computer scientist Douglas Engelbart and the graphical user interface together in one frame. He demonstrated interaction with windows via the mouse, a cursor that activated various programs, text-processing software, and hyperlinks, and he presented the planned ARPANET, in which his laboratory was to become one of the first network nodes; this network would later grow into the Internet. This was a screencast by any definition, made possible by a computer in transition from calculating machine to computing medium.

Computability and animation

That computers did not have graphical user interfaces from the very beginning comes down to one thing only: a lack of computing capacity. The idea itself had been formulated by 1945 at the latest, when the science administrator Vannevar Bush described the Memex, a hypothetical information-processing machine, in his essay “As We May Think”, at a time when the first electronic computers had only just been built.

It was not until the late 1960s that enough computing capacity became available to compute a graphical user interface. After all, that is what a graphical user interface is: a constantly computed animation in which a colour value has to be computed for every pixel on the screen. A mouse cursor, for instance, is the computation of several adjacent pixels whose colour value is given as “black” (RGB 0:0:0). When the cursor moves in a particular direction, the pixels in its path that a moment earlier were “white” (RGB 255:255:255) are changed to black, while the pixels it leaves behind revert to white. For this animation to appear smooth to the human eye, all the pixels on the screen must be recomputed at least 15 times a second. By comparison, modern monitors easily handle 100 Hz, that is, 100 new screen computations per second. In the late 1960s, this was unimaginable.
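The principle of a constantly recomputed screen can be illustrated with a toy model. The following Python sketch is only an illustration under stated assumptions: a tiny 8 × 4 pixel screen, a 2 × 2 black cursor, and a full redraw of every pixel in every frame (real systems usually redraw only what has changed). The function names and the 1920 × 1080 resolution used in the arithmetic are hypothetical and do not come from the text.

```python
# Toy model of a GUI as a "constantly computed animation".
# Assumptions (hypothetical, not from the text): an 8 x 4 pixel screen,
# a 2 x 2 black cursor, and every pixel recomputed in every frame.

WHITE = (255, 255, 255)  # RGB 255:255:255
BLACK = (0, 0, 0)        # RGB 0:0:0

WIDTH, HEIGHT = 8, 4
CURSOR_SIZE = 2


def render_frame(cursor_x, cursor_y):
    """Compute a colour value for every pixel, given the cursor position."""
    frame = []
    for y in range(HEIGHT):
        row = []
        for x in range(WIDTH):
            inside = (cursor_x <= x < cursor_x + CURSOR_SIZE
                      and cursor_y <= y < cursor_y + CURSOR_SIZE)
            row.append(BLACK if inside else WHITE)
        frame.append(row)
    return frame


def show(frame):
    """Render a frame as text: '#' for a black pixel, '.' for a white one."""
    return "\n".join(
        "".join("#" if px == BLACK else "." for px in row) for row in frame
    )


def pixels_per_second(width, height, refresh_hz):
    """Colour values computed per second if every pixel is redrawn each frame."""
    return width * height * refresh_hz


if __name__ == "__main__":
    # Move the cursor one pixel to the right per frame: the column it enters
    # turns black, the column it leaves turns white again.
    for x in range(3):
        print(show(render_frame(x, 1)))
        print()

    # Hypothetical full-HD screen, chosen only for the arithmetic.
    print(pixels_per_second(1920, 1080, 15))   # 31104000
    print(pixels_per_second(1920, 1080, 100))  # 207360000
```

Multiplying the pixel count by the refresh rate shows how quickly the workload grows: at the assumed resolution, moving from 15 Hz to 100 Hz raises the number of colour values computed per second from about 31 million to about 207 million.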

It is complicated

If every pixel is constantly being recomputed, is what we are seeing real? Or is it virtual? Is there, in fact, a difference? To explore these questions further, two complicated terms from semiotics need to be introduced, although they will be used here in a simplified way. Unfortunately, this is unavoidable.

Firstly, there is the index. An index means that a sign has a direct relation to what it designates. Indexicality is thus the situation in which a sign refers to an object together with its context. A photograph of a heron can be perceived indexically because it also depicts the heron’s context: a lake, reeds, and so on. Viewers ascribe a higher degree of truthfulness to what is depicted on the basis of indexicality, especially in photographs, film, and video.

By contrast, there is the icon, which is linked to what it designates through similarity or metaphor. The folder icon in a graphical user interface, for instance, does not refer to a real folder but to the abstracted concept of a folder. It is abstracted in the sense that it does not depict a specific folder; it is a graphical outline of the form, a reconstruction. It is also abstract in the sense that it is a metaphor addressed to human beings and nothing more. To the operating system, the metaphor means nothing: from its perspective, there is an accumulation of data at the storage address 17A0b:1334f – not a folder.

EVE Online – a graphical user interface for players

So what kind of image do we encounter in a graphical user interface? The answer is already apparent: the iconic image, a visualised data metaphor. This also applies to games like EVE Online, in which the interconnected computers of thousands of players compute and animate pixels in real time. No real spaceships fly through the virtual space of the game, so there is nothing whose existence the pictures could prove; their indexicality is approximately zero. Instead of indicating something that was there, the animated images in EVE Online refer to something entirely different. They refer to something collectively imagined (the potential actions offered by spaceships) and to something concrete: a complex infrastructure of databases in computer centres, of data and models, of networks, and of individual PCs in players’ homes, but also an infrastructure of administrators, programmers, and community and support staff. The images produced by this complex configuration, the graphical user interfaces, are iconic.

In Let’s Play videos of EVE Online, the person explaining the game is often included in the picture, although frequently we only hear their voice. Even if what we hear is a digital reproduction, the speaker really did speak at some point. Their existence is thus indexically anchored.

Hybrids and testimony

In screencasts, we encounter two kinds of image: firstly, an indexical image that shows the lecturer as they speak, and secondly, a generated image, an animation that is constantly recalculated on the basis of models and data. The indexical image shows a situation that took place as shown: someone sat in a room and talked whilst being filmed by a camera. It is presented as determinate, as something that happened in this way and in no other – a “stability in the image” (Mersch 2006, 102).

The generated image, on the other hand, is concerned with potential: what is shown does not necessarily have to appear the way we are seeing it. The data and models of EVE Online or of a graphical user interface can always be visualised differently, restricted only by convention. As soon as we find ourselves within a computing medium, the image is no longer a question of reproducing reality but a question of design. In a screencast, the two kinds of image are interwoven and superimposed on one another. In this hybrid format, speakers fulfil a specific media function: as witnesses anchored in reality, they lend credibility to the iconic, constantly computed animation known as the graphical user interface.